-

And the rest as they say… (a manifesto for Techno-enviro-cultural-socioeconomic-politics)

By Kayt Button

When we think about historical research, it is easy to picture someone trapped behind piles of dusty literature and papers, getting lost in the minutiae of their chosen subject. After all, years of study of history have preceded their final, chosen, specialised subject of “The Pig War of 1859”!

-

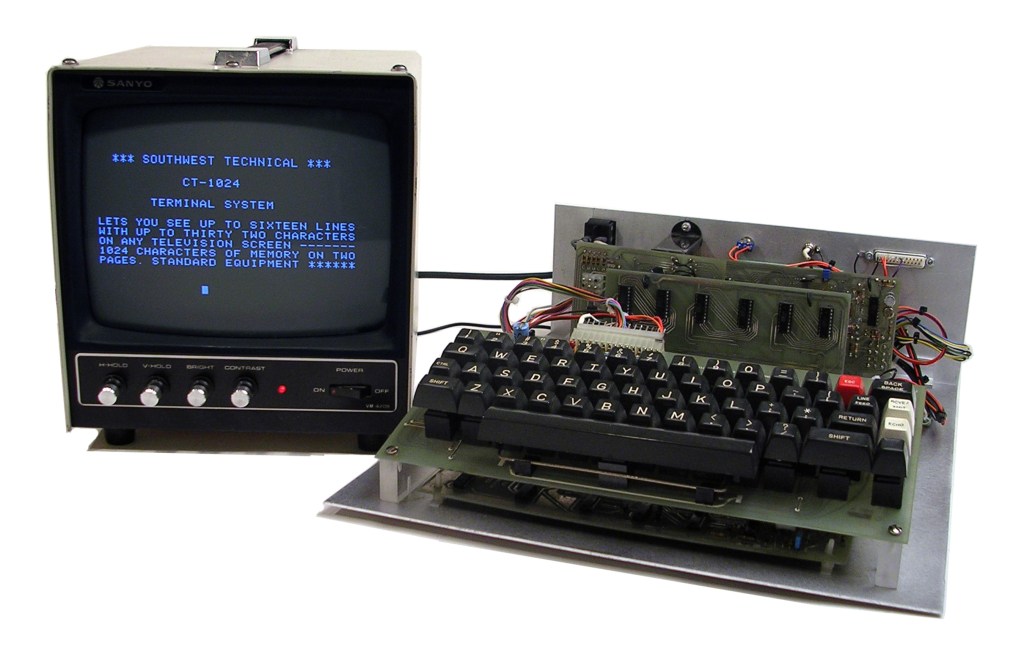

Reflections on Making ‘Big Data’ Human

By Emily Ward @1066unicorn and Carys Brown @HistoryCarys

If there was one thing that the Making Big Data Human conference made clear, it was that ‘Big Data’, and indeed digital methodologies in general, provide some very exciting opportunities to advance historical research. From the ambitious and wide-ranging National Archives’ Traces Through Time project, which looks…

-

Fostering Research Communities

By Matt Tibble on behalf of Inciting Sparks @IncitingSparks

‘Public engagement’ and ‘research communities’ – these are the new buzzwords from the Arts and Humanities Research Council, one of the largest funding bodies for historical research in the UK. Their message is that the gulf between the ivory tower of academic research in higher education institutions and…